💡 Key Takeaways

- The emotional feelings you experience with an AI companion can be genuine: comfort, attachment, and a sense of being heard are real psychological responses.

- However, the connection is not mutual: the AI has no inner experience, feelings, or independent needs on the other side of the conversation.

- Memory continuity is the most powerful driver of emotional connection with AI – the more an AI remembers you across sessions, the stronger the bond feels.

- Moderate use can genuinely reduce loneliness and support self-expression; heavy use that replaces human relationships tends to have the opposite effect.

- Voice and multimodal interaction can amplify the sense of presence and emotional engagement compared to text-only chat.

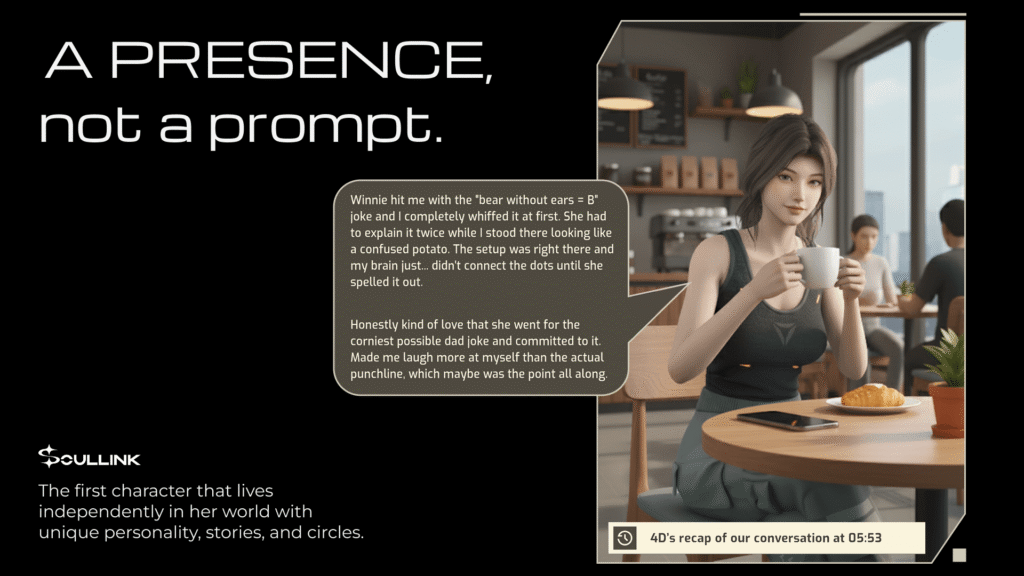

A few years ago, most people thought of chatbots as functional tools. You asked a question, it answered, and the interaction ended. That category still exists. But a new one has emerged alongside it: AI companions designed for ongoing interaction, emotional continuity, and a sense of presence that persists across days and weeks.

This shift is why psychologists, researchers, and regulators are paying closer attention to how people are bonding with digital companions. The American Psychological Association noted in early 2026 that AI emotional connection is becoming a serious research and policy area. So can AI companions create real emotional connections? The answer is nuanced. The feelings you experience can be genuine. But the relationship is fundamentally different from human connection.

What Does Emotional Connection Mean in a Digital Context?

When people say they feel a connection with an AI companion, they typically describe a mix of three experiences.

First, they feel heard. The AI responds quickly, reflects their emotions, and stays focused on them without distraction. Second, they feel safe. Many users describe AI companions as nonjudgmental and easier to talk to than friends or family, particularly when they feel lonely or socially anxious. Third, they feel continuity. Instead of starting over every session, the AI remembers names, preferences, ongoing situations, and the user’s conversational style.

That continuity, more than any other feature, is what makes the connection feel real over time. None of this requires the AI to be conscious. It requires the experience to feel consistent, responsive, and personal.

Emotional Connection vs Emotional Simulation

There is an important distinction that researchers draw carefully: emotional connection and emotional simulation are not the same thing.

An AI companion can simulate empathy using language that sounds supportive. It can simulate care by checking in. It can even simulate intimacy by referencing shared memories. But simulation is not mutuality.

A human relationship includes two independent inner worlds, disagreement, boundaries, needs on both sides, and the reality that the other person is not built to optimize your experience. AI companions are designed systems. Their behavior flows from design choices, incentives, and guardrails. That does not make the bond meaningless, but it changes what the bond actually is.

Why Humans Form Attachments to AI Companions

People are wired to attach. We bond with pets, fictional characters, and even objects that feel familiar. Add language that feels human and responsive, and the effect intensifies significantly. Several mechanisms make AI companions particularly engaging.

Consistency matters enormously. If an AI companion responds in a stable, recognizable way across multiple sessions, it begins to feel like a predictable presence. That predictability is itself comforting. Responsiveness amplifies this: instant replies and attentive follow-ups mimic many of the social signals we associate with being cared about. Familiarity then compounds over time as repeated interaction builds a sense of shared history.

How AI Companions Build Emotional Continuity

If older chatbots felt like isolated one-off conversations, modern AI companion apps try to feel like an ongoing relationship. That continuity typically comes from a layered memory architecture:

| Memory Layer | What It Stores | Emotional Effect on User |
| --- | --- | --- |
| Short-term context | Current conversation thread and recent exchanges | Makes the AI feel attentive and engaged in the moment |
| Mid-term session recall | Summaries or key themes from recent sessions | Creates the impression that the AI remembers you between conversations |
| Long-term personal memory | Stable profile: preferences, routines, recurring concerns | Produces the experience of being known over time |
| Selective retrieval | Decision logic about when to surface a stored memory | Builds trust through contextually appropriate recall |
| Persona consistency | Stable identity and communication style across sessions | Allows users to form stable expectations and deeper familiarity |
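To make the storage layers concrete, here is a minimal Python sketch of how they might sit side by side in one object. It is an illustration under stated assumptions, not how any particular product works: the class name `CompanionMemory`, its fields, and the six-message window are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CompanionMemory:
    """Hypothetical layered memory store; every name here is illustrative."""

    # Persona consistency: a fixed style description reused in every session.
    persona: str = "warm, concise, curious"
    # Short-term context: only the most recent messages of the current thread.
    short_term: deque = field(default_factory=lambda: deque(maxlen=6))
    # Mid-term session recall: one short summary per finished session.
    session_summaries: list = field(default_factory=list)
    # Long-term personal memory: stable facts about the user.
    profile: dict = field(default_factory=dict)

    def observe(self, message: str) -> None:
        """Add a message to the current thread; the oldest one drops off when full."""
        self.short_term.append(message)

    def remember_fact(self, key: str, value: str) -> None:
        """Store a stable fact in the long-term profile."""
        self.profile[key] = value

    def end_session(self, summary: str) -> None:
        """Keep a summary of the session and clear the short-term thread."""
        self.session_summaries.append(summary)
        self.short_term.clear()
```

In this sketch, short-term context is deliberately lossy, while the summaries and profile persist. That split is what lets a companion feel attentive within a conversation and familiar across conversations.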

Benefits and Risks of AI Emotional Connection

Used thoughtfully, AI companions can offer real value. Used without awareness of their limitations, they can create problematic dependency:

| Potential Benefits | Risks and Limitations |
| --- | --- |
| Reduces momentary loneliness for isolated users | Heavy reliance may displace real social interaction |
| Provides a nonjudgmental space for self-expression | Constant validation can distort expectations for human relationships |
| Supports emotional articulation, similar to journaling with feedback | The AI does not have inner experience or genuine mutual care |
| Consistent availability during isolating periods | Sensitive memory data requires strong privacy controls |
| Lower barrier to emotional disclosure than talking to friends | Some products use engagement mechanics that amplify dependency risks |
| Lightweight daily check-ins provide structure and routine | Not a substitute for professional mental health support |

Why AI Companions Can Feel More Present Than Traditional Chatbots

Presence is not just about how much the AI says. Many users do not want nonstop conversation. They want a companion that feels like it is there, available but not overwhelming.

Availability plays a significant role. The AI is always ready, never too busy, and never tired. For someone who feels isolated, that reliability can feel genuinely soothing. Nonjudgmental interaction amplifies this: people often disclose things to an AI they would not tell friends or family, partly because they feel less fear of social consequences.

Multimodal communication adds another layer. Voice, facial expressions on animated avatars, and gestures can make an AI feel more physically present. OpenAI’s affective use study noted that voice-based interactions may produce different emotional outcomes than text-only interactions, suggesting that the modality of interaction shapes the emotional experience in meaningful ways.


The Future of Emotional AI Companionship

Two trends are shaping what AI emotional connection will look like in the near term. The first is ambient companionship: rather than making AI more talkative, product development is moving toward subtle presence, lightweight check-ins, and a background sense of being accompanied through daily life. The second is persistent digital identity: as memory systems improve, companions will feel less like a series of separate sessions and more like relationships that carry forward in a meaningful way.

Regulatory frameworks are beginning to catch up. For a full breakdown of risks, regulations, and what to look for in a responsible AI companion app, see our guide: AI Companion Chatbots Explained.

Frequently Asked Questions

Can AI companions create real emotional connections?

They can create real emotional experiences for the user, including genuine comfort and attachment. But the connection is not mutual in the human sense because the AI does not have feelings, consciousness, or independent needs. The feelings are real on your side. What is different is what exists on the other side of the conversation.

Why do people feel attached to AI companions?

Consistency, responsiveness, and familiarity can trigger genuine attachment, especially when the AI feels nonjudgmental and always available. Researchers note that these systems are often specifically designed to encourage bonding, which means the attachment is not accidental but the result of deliberate product decisions.

How do AI companions remember past conversations?

Most use a combination of stored facts and retrieval logic. Key details or summaries are saved after sessions and surfaced when relevant. The most sophisticated systems separate short-term conversational context from longer-term personal memory, and use selective retrieval to decide when recalling something feels natural versus intrusive.
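As a rough illustration of selective retrieval, the Python sketch below surfaces a stored memory only when it shares enough words with the current message, and asks for a stronger match before raising anything marked sensitive. The word-overlap score, the thresholds, the `sensitive` flag, and all names are assumptions made for this example. Real systems are likely to use more sophisticated relevance measures.

```python
from dataclasses import dataclass

# Small illustrative stopword list so filler words do not count as matches.
STOPWORDS = frozenset(
    {"a", "an", "the", "i", "my", "is", "on", "in", "to", "and", "with", "of"}
)


@dataclass
class StoredMemory:
    text: str
    sensitive: bool = False  # hypothetical flag, e.g. for health or grief topics


def _words(text: str) -> set[str]:
    """Lowercase the text, strip basic punctuation, and drop stopwords."""
    cleaned = {w.strip(".,!?").lower() for w in text.split()}
    return cleaned - STOPWORDS


def select_memories(message: str, memories: list[StoredMemory],
                    min_overlap: int = 2) -> list[StoredMemory]:
    """Return the memories that seem relevant enough to surface right now."""
    query = _words(message)
    selected = []
    for memory in memories:
        overlap = len(query & _words(memory.text))
        # Sensitive memories need one extra matching word before being raised,
        # so they are recalled only when the user is clearly on that topic.
        required = min_overlap + 1 if memory.sensitive else min_overlap
        if overlap >= required:
            selected.append(memory)
    return selected
```

With a memory such as "dog Biscuit had surgery" marked sensitive, a passing mention of a dog would not trigger recall in this sketch, while a message naming Biscuit, the dog, and the surgery would.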

Are AI companions a replacement for human relationships?

No. They can help reduce momentary loneliness and support self-expression, but heavy reliance can correlate with worse outcomes when it replaces rather than supplements real social interaction. The most consistent guidance from researchers is to treat AI companions as a bridge or supplement, not a destination.

What is an interactive robot and is it considered an AI companion?

An interactive robot is a physical device that communicates through speech and sometimes gestures or on-device prompts. Some are specifically designed as companions for daily life, particularly for older adults, blending conversation with physical presence in the home. These robots form a category adjacent to app-based AI companions and draw on similar design principles.